New DLA Will Accelerate the Inference Performance of Deep Convolutional Neural Network Models Used for Image Classification and Object Detection Applications

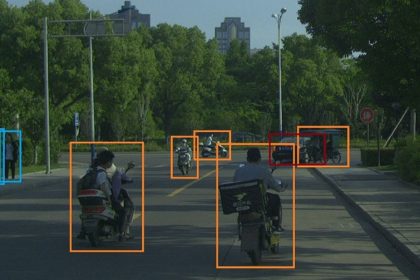

April 2, 2019. FABU Technology Ltd., a leading artificial intelligence company focused on intelligent driving systems, announces the Deep Learning Accelerator (DLA), a custom module in Phoenix-100 that improves the performance of object recognition and image classification in convolutional neural networks (CNNs), a key component of computer vision. The DLA can be customized for a broad range of models and can process the high data volumes produced by cameras and sensors, enabling high-performance object detection for self-driving vehicles.

Convolutional neural networks, which are specialized algorithms that can perform image processing and classification, have revolutionized a variety of computer vision tasks, including object detection and facial recognition. To perform image classification, the CNN takes input image data (usually derived from sensors) and analyzes it for low-level features such as edges and curves. The image data is then passed through a series of convolutional, nonlinear, pooling, and fully connected layers to produce an output, which can be a single class (dog, cat, etc.) or a set of class probabilities that best describes the image.
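The layer sequence described above can be illustrated with a minimal sketch in plain Python. It is a toy example for explanation only, not FABU's implementation: the 6 x 6 image, the edge-detecting kernel, the fully connected weights, and all function names are assumptions chosen for illustration.

```python
import math

def conv2d(image, kernel):
    """Valid 2-D convolution (computed as cross-correlation, as is usual in CNNs)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(out_w)]
            for r in range(out_h)]

def relu(fmap):
    """Nonlinear layer: clamp negative activations to zero."""
    return [[max(0.0, v) for v in row] for row in fmap]

def max_pool(fmap, size=2):
    """Pooling layer: keep the largest value in each size x size window."""
    return [[max(fmap[r + i][c + j] for i in range(size) for j in range(size))
             for c in range(0, len(fmap[0]) - size + 1, size)]
            for r in range(0, len(fmap) - size + 1, size)]

def fully_connected(features, weights, biases):
    """Fully connected layer: one weighted sum (logit) per output class."""
    return [sum(f * w for f, w in zip(features, row)) + b
            for row, b in zip(weights, biases)]

def softmax(logits):
    """Turn logits into class probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(image, kernel, weights, biases):
    """Convolution -> nonlinearity -> pooling -> fully connected -> probabilities."""
    pooled = max_pool(relu(conv2d(image, kernel)))
    features = [v for row in pooled for v in row]  # flatten the feature map
    return softmax(fully_connected(features, weights, biases))

# Hypothetical inputs: an image with a vertical edge and a vertical-edge kernel.
IMAGE = [[0, 0, 0, 1, 1, 1] for _ in range(6)]
EDGE_KERNEL = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]
FC_WEIGHTS = [[0.5, 0.5, 0.5, 0.5], [-0.5, -0.5, -0.5, -0.5]]  # class 0: "edge"
FC_BIASES = [0.0, 0.0]

probabilities = classify(IMAGE, EDGE_KERNEL, FC_WEIGHTS, FC_BIASES)
```

In this toy case the kernel responds strongly where the image changes from dark to bright, so the first class receives nearly all of the probability. Production CNNs stack many such layers with learned weights, which is the workload a DLA is built to accelerate.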

To improve the accuracy of computer vision, new CNN models are evolving with deeper and wider layers to better extract features from the input image. Because these state-of-the-art CNNs require multiple types of layers with varying layer sizes and branches, acceleration platforms based on generic, one-size-fits-all ASICs struggle to boost CNN performance effectively. The ever-increasing data volume from high-definition sensors, such as cameras, LiDAR and mm-wave radar, further escalates the problem in resource-constrained systems such as self-driving vehicles. Therefore, the ability to customize the ASIC layer architecture is vitally important if a DLA is to deliver higher-performance object detection.

The FABU Phoenix-100 is a 28nm ASIC design for a coarse-grained DLA that can be reconfigured for a diverse range of CNN models. The key components of the DLA are multiple coarse-grained modules that provide volumetric customization of various CNN computing primitives. The dimensions of these modules and the sizes of the input/output buffers are optimized to balance the computation and memory bandwidth requirements for a set of object detection algorithms that are commonly used in self-driving vehicles. This DLA design delivers inference performance comparable to that of high-end GPUs, such as the Nvidia Titan X, while consuming about 40x less power [1].

The Phoenix-100 has demonstrated the ability to accelerate the inference of several state-of-the-art deep CNN models used for image classification and object detection applications. As demonstrated by many prior tests, 8-bit fixed point is sufficient for CNN inference to achieve the same accuracy level as the floating-point format. The performance on different object detection networks is also compared with that of the Nvidia Titan X GPU, as shown in Figure 4. The 32-bit floating-point (FP32) GPU performance figures are taken directly from the original works [2][3][4]. To make a fair comparison, the INT8 GPU performance is also estimated, based on the result in [5] that INT8 provides around 1.3x speedup over FP32 on the Titan X GPU.
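Two ideas in this comparison can be sketched in a few lines of Python: reducing floating-point values to 8 bits, and scaling a published FP32 frame rate by the roughly 1.3x factor from [5]. The sketch assumes symmetric per-tensor quantization, which is one common 8-bit scheme and not necessarily the fixed-point format used in the Phoenix-100. The function names and the sample weights are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization (an assumed scheme): map floats
    to integers in [-127, 127] using a single shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate floating-point values from the 8-bit integers."""
    return [q * scale for q in quantized]

def estimate_int8_fps(fp32_fps, speedup=1.3):
    """Estimate INT8 GPU throughput from an FP32 figure, using the
    approximate 1.3x speedup reported in [5]."""
    return fp32_fps * speedup

# Hypothetical weights, not taken from any model in the comparison.
weights = [0.5, -1.27, 0.02, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Rounding to the nearest step bounds the error by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Because each value is rounded to the nearest multiple of `scale`, the per-value error is at most half a step, which gives some intuition for why 8-bit inference can track floating-point accuracy when value ranges are well behaved.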

The FABU DLA test chip is manufactured with TSMC 28nm CMOS technology. The proposed DLA 2.0 is projected to perform object detection at more than 40 frames per second (fps) for 2MP (1920 x 1080) images. The power consumption of the entire SoC is expected to be 5 W.
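As a rough back-of-the-envelope check, the projected figures imply the following pixel throughput, per-frame time budget, and per-frame energy. The calculation treats 40 fps as the minimum frame rate and assumes the SoC draws the full 5 W continuously, so the results are bounds rather than measurements. The variable names are illustrative.

```python
WIDTH, HEIGHT = 1920, 1080   # 2MP frame size from the projection
TARGET_FPS = 40              # projected minimum frame rate
SOC_POWER_W = 5.0            # expected power consumption of the entire SoC

pixels_per_frame = WIDTH * HEIGHT                   # 2,073,600 pixels
pixels_per_second = pixels_per_frame * TARGET_FPS   # at least 82,944,000 pixels/s
frame_budget_ms = 1000.0 / TARGET_FPS               # at most 25 ms per frame
energy_per_frame_j = SOC_POWER_W / TARGET_FPS       # at most 0.125 J per frame
```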

References:

[1] C. Angelini, “Update: Nvidia Titan X Pascal 12GB Review,” Tom's Hardware, https://www.tomshardware.com/reviews/nvidia-titan-x-12gb,4700-7.html

[2] W. Liu et al., “SSD: Single Shot MultiBox Detector,” ECCV, 2016.

[3] J. Redmon et al., “YOLOv3: An Incremental Improvement,” arXiv:1804.02767, 2018.

[4] S. Ren et al., “Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks,” NIPS, 2015.

[5] S. Migacz, “8-bit Inference with TensorRT,” Nvidia, 2017.

[6] D. Franklin, “Jetson Nano Brings AI Computing to Everyone,” https://devblogs.nvidia.com/jetson-nano-ai-computing/, 2019.

For more information, please contact Angela Suen, Head of Marketing, Angela.suen@fabu.ai, +1-408-475-2556.

SOURCE FABU http://fabu.ai/en/